The harness is becoming the business model

Anthropic’s latest Claude Code pricing change is a signal that the value in AI is moving outward, from the model itself to the harness around it.

Anthropic’s decision to charge extra for Claude Code usage through third-party harnesses like OpenClaw looks, on the surface, like a pricing change.

I think it is something more important than that.

It is a signal that the value in AI is moving further away from the model itself and further into the layer around it.

For a while, most of the AI conversation has been trapped in model talk. Which model is smartest. Which one is fastest. Which one writes the best code. Which one wins the benchmark this week. That still matters, obviously. But I think many people are putting too much weight on that layer alone.

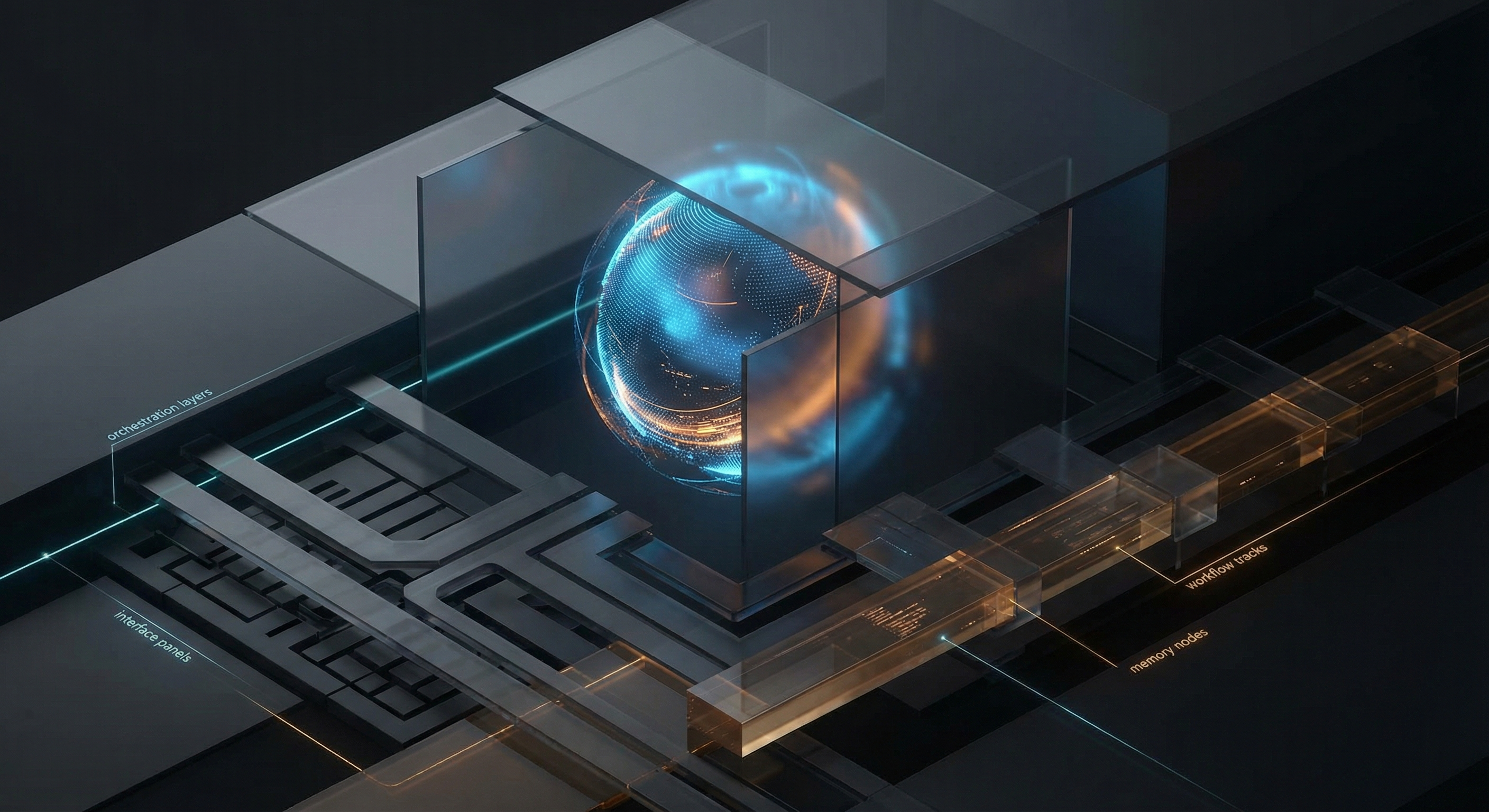

What increasingly shapes the real user experience is the harness.

By that I mean the environment the model operates inside. The interface. The memory model. The orchestration logic. The approval flows. The tool permissions. The runtime behaviour. The integrations. The way work gets delegated, resumed, reviewed and shipped.
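To make that concrete, here is a toy sketch of the split in Python. Every name in it (`Harness`, `ModelFn`, the `CALL name arg` reply convention) is my own invention for illustration and not any vendor's API. The model is a single function. Memory, tool permissions and the approval flow all sit in the code around it.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical types for illustration only; not a real vendor API.
ModelFn = Callable[[str], str]   # the "model": text in, text out
ToolFn = Callable[[str], str]    # a tool: argument in, result out


@dataclass
class Harness:
    model: ModelFn
    tools: dict[str, ToolFn]
    allowed_tools: set[str]                          # tool permissions
    approve: Callable[[str, str], bool]              # approval flow
    memory: list[str] = field(default_factory=list)  # preserved state

    def run(self, task: str) -> str:
        # Context loading: everything remembered so far, then the new task.
        prompt = "\n".join(self.memory + [task])
        reply = self.model(prompt)

        # Toy convention (an assumption): "CALL <name> <arg>" requests a tool.
        if reply.startswith("CALL "):
            name, _, arg = reply[len("CALL "):].partition(" ")
            if name not in self.allowed_tools or name not in self.tools:
                result = f"denied: {name} not permitted"
            elif not self.approve(name, arg):
                result = f"denied: {name} not approved"
            else:
                result = self.tools[name](arg)
        else:
            result = reply

        # What gets remembered is the harness's decision, not the model's.
        self.memory.append(f"{task} -> {result}")
        return result
```

Swap the `model` function for a different one and nothing else in this sketch has to change. Permissions, approvals and memory stay where they are, with whoever owns the harness.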

That layer used to feel like packaging.

Now it is starting to look a lot more like the product.

And from there, it is not a big leap to the next conclusion: the harness is becoming the business model.

That is why moves like this matter more than they first seem to.

When a provider starts separating direct usage from usage through third-party harnesses, they are not just adjusting monetization. They are drawing a line around where they think value should accrue. Not only to the model, but to the environment that operationalizes the model.

That makes strategic sense.

As models get better and, over time, more interchangeable, the leverage moves outward. Away from raw model access and toward workflow control. The value does not disappear. It relocates.

That is where stickiness starts to live.

Not only in which model you use, but in where work starts, how context is loaded, what permissions exist, how state is preserved, who verifies outputs, what gets automated, what gets remembered, and how all of it fits into a repeatable operating loop.

This is also why I think a lot of companies are underestimating vendor dependency in the AI era.

They think they are adopting a model.

In practice, they are often adopting a harnessed operating surface shaped by someone else’s pricing logic, policy decisions, incentives and product boundaries.

That is a much deeper dependency than most teams realize.

The model may still be the engine. But the harness is becoming the steering wheel, the dashboard, the gearbox and, increasingly, the monetization layer too.

That is where power is consolidating.

If you are serious about building AI capability inside a company, I think this is one of the shifts that matters most right now. The question is no longer only which model you choose. It is which operating surface you are willing to build on, how much of it you actually control, and what happens when the owner of that surface changes the rules.

That is not a tooling detail.

It is strategy.