KPMG Proved It: Process-First Beats Tool-First

KPMG's AI agent playbook finds that organizations redesigning their processes before deploying agents see 20% better ROI. Only 11% do it. The rest bolt agents onto broken workflows and wonder why nothing changes.

KPMG published its AI agent playbook last month, and one number stood out: organizations that redesign their processes before deploying AI agents see 20% better ROI than those that don't. Twenty percent. Not from better models, not from fancier tooling, not from bigger budgets. From rethinking the work itself before handing it to machines.

This is the kind of finding that should reshape how every enterprise approaches agentic AI. It won't, of course, because rethinking processes is hard, politically messy, and requires a kind of organizational honesty that most leadership teams actively avoid.

The numbers tell a clear story

According to KPMG, only 11% of organizations qualify as "leaders" in their agentic AI maturity model. These are the ones doing process redesign first, building governance structures, and thinking about orchestration before they think about deployment. The other 89% are doing what enterprises always do: bolting new technology onto existing workflows and hoping for transformation.

McKinsey's numbers paint a similar picture. Roughly 10% of companies attempting AI agent initiatives manage to scale them beyond pilot. The rest get stuck in what's best described as "demo purgatory," where something works in a controlled environment but collapses the moment it touches real organizational complexity.

That 11% and that 10% probably overlap heavily. What those organizations share has nothing to do with technical sophistication: they're willing to question the process before automating it.

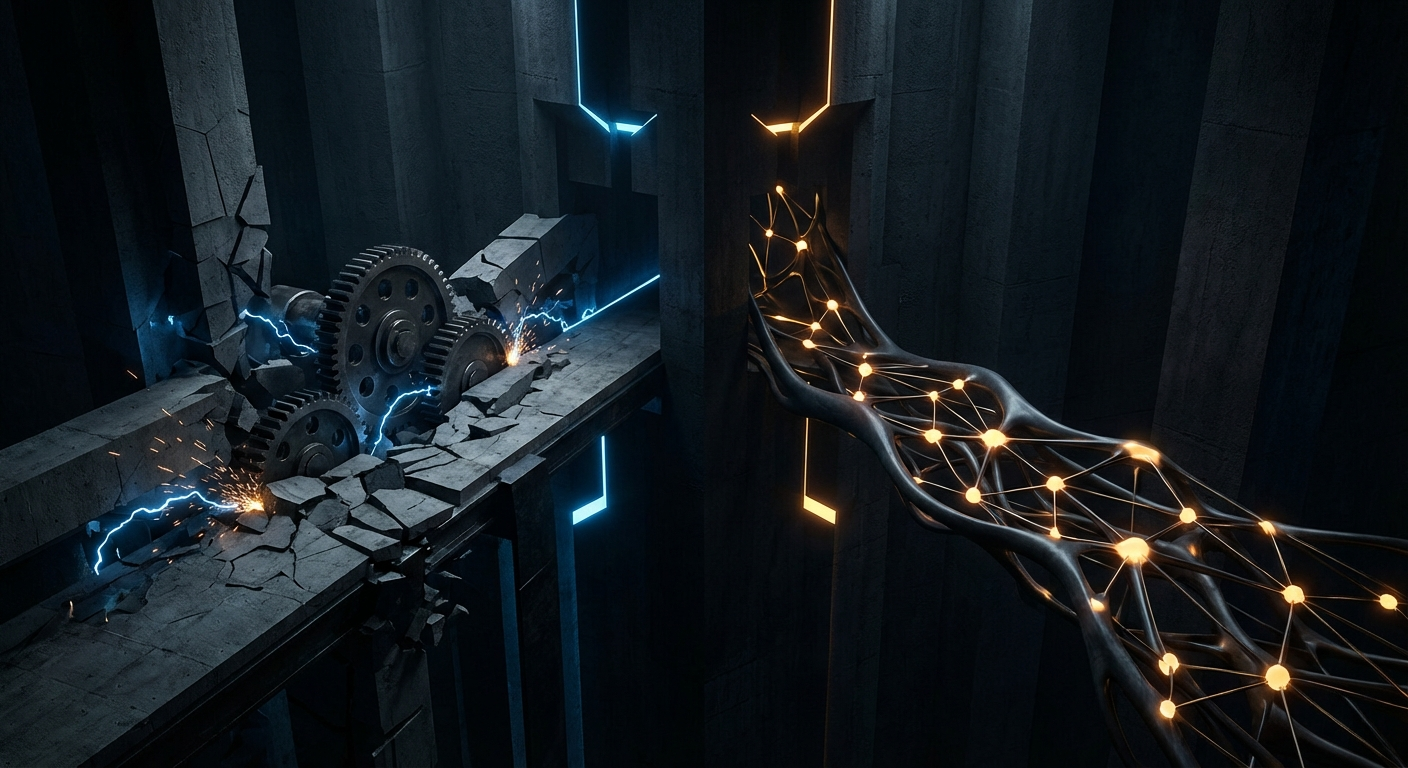

Process-first versus tool-first

The difference between these two approaches is fundamental, and it's worth being precise about what each one actually means.

Tool-first says: "We have this workflow. Let's add an AI agent to make it faster." The workflow stays the same. The roles stay the same. The handoffs, approval chains, and information flows all remain intact. An agent just gets inserted somewhere in the middle to do something a human used to do, usually faster and cheaper.

Process-first says: "We have this outcome we're trying to achieve. Given that we now have agents capable of autonomous execution, what's the best way to get there?" The workflow might change completely. Roles shift. Some handoffs disappear because they only existed due to human bandwidth limitations. Information flows get restructured because agents can work in parallel on tasks that humans had to handle sequentially.

The distinction is between automating what exists and rethinking what should exist.

Here's why this matters so much for agents specifically. Traditional automation, like RPA, could get away with the tool-first approach because it was operating at the task level. You had a human clicking through screens, and you replaced the clicking with a bot. The process didn't need to change because the bot was doing the exact same atomic actions.

Agents are different. They operate at the workflow level. They can make decisions, coordinate with other agents, handle exceptions, and adapt to context. When you constrain an agent to follow a process designed around human limitations, you're using a jet engine to power a bicycle. It works, technically. But you're leaving most of the capability on the table.

Where the gains concentrate

KPMG's data shows something else worth paying attention to: the largest ROI gains aren't coming from the use cases that get the most press. They're concentrated in operations, supply chain, and finance, not in customer-facing chatbots or content generation.

This makes sense when you think about it. Operations and supply chain are exactly the domains where process complexity is highest, where multiple systems need to coordinate, and where the potential for multi-agent orchestration is greatest. A single agent answering customer questions is useful but limited. Four agents coordinating across procurement, inventory, logistics, and vendor management while a fifth monitors the whole system for anomalies is where the architecture starts delivering compound returns.

The organizations getting 20% better ROI aren't doing it by building better individual agents. They're doing it by designing better systems of agents, and that requires process redesign because the old processes were never designed for that kind of coordination.

Multi-agent orchestration is where the real gains live. But you can't orchestrate agents across a process that was designed for humans passing documents to each other through an approval chain. The process has to change first. The agents have to be designed into the workflow, not grafted onto it.

The pattern is always the same

Anyone who's been through enough digital transformations will recognize what's happening here. The pattern is identical to what played out during CMS migrations, cloud transitions, and every other major technology shift of the last fifteen years.

The technology is never the bottleneck. Organizational design is.

During the headless CMS wave, the companies that succeeded weren't the ones that picked the best platform. They were the ones that restructured their content operations first. They asked: "Given that content is now decoupled from presentation, what does our editorial workflow actually need to look like?" The ones that failed just migrated their old Drupal workflows into a headless CMS and wondered why nothing felt better.

Cloud migration followed the same script. Lift-and-shift gave you a bigger bill and the same architecture. Cloud-native redesign gave you scalability and cost efficiency. Same technology, radically different outcomes, entirely determined by whether you were willing to rethink the work.

AI agents are repeating this pattern at higher speed and higher stakes. The technology is more capable than anything that came before it. The organizational resistance to change is exactly the same as it's always been. And the gap between leaders and laggards is widening faster because agents amplify whatever you point them at. Point them at a well-designed process, and you get compound efficiency. Point them at a broken one, and you get compound dysfunction.

That's the part KPMG's 20% number doesn't fully capture. The difference between process-first and tool-first isn't just 20% ROI. It's the difference between building a system that gets better over time and building one that calcifies dysfunction at machine speed.

What this means practically

If you're leading an AI initiative right now, the temptation is always to start with the tool. Pick an agent framework, run a pilot, show quick results to leadership, get more budget. That path is seductive because it generates visible activity fast. It also leads directly into the 89% that can't scale.

The alternative is harder to sell internally but dramatically more effective: before deploying a single agent, map the process you're targeting. Question every handoff. Ask why each step exists. Identify which steps exist only because of human bandwidth constraints that agents eliminate. Redesign the process for the capabilities you now have, not the capabilities you had when the process was created.
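To make the mapping exercise concrete, here is a minimal sketch of how a team might record a process before touching any agent framework. It assumes a deliberately simple model: each step has an owner, a stated reason for existing, and a flag for whether it exists only because of human bandwidth limits. The `Step` class, the `handoffs` and `redesign_candidates` helpers, and the invoice workflow are all hypothetical illustrations, not anything from KPMG's playbook.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    owner: str            # role that performs the step today
    reason: str           # why the step exists, stated plainly
    bandwidth_only: bool  # True if it exists only because people can't work in parallel or at volume


def handoffs(steps: list[Step]) -> list[tuple[str, str]]:
    """Every point where work passes from one owner to the next (each one is worth questioning)."""
    return [(a.name, b.name) for a, b in zip(steps, steps[1:]) if a.owner != b.owner]


def redesign_candidates(steps: list[Step]) -> list[str]:
    """Steps that exist only because of human bandwidth limits: candidates to remove or merge."""
    return [s.name for s in steps if s.bandwidth_only]


# Hypothetical invoice workflow, for illustration only.
invoice_process = [
    Step("Receive invoice", "AP clerk", "Intake", False),
    Step("Batch invoices for weekly review", "AP clerk", "Analysts can't review daily", True),
    Step("Match to purchase order", "AP analyst", "Catch billing errors", False),
    Step("Manager approval", "Finance manager", "Spending control", False),
]

print(handoffs(invoice_process))
print(redesign_candidates(invoice_process))
```

On this toy workflow the audit flags two handoffs and one step, the weekly batching, that only exists because analysts can't review invoices as they arrive. That is exactly the kind of step an agent makes unnecessary, and exactly the kind a tool-first rollout would quietly preserve.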

Then design your agents into that new process. Give them clear roles, clear boundaries, and clear interfaces with both humans and other agents. Build verification loops where humans validate output at the points that matter most. Make the system measurable so you can see what's working and what needs calibration.
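As one way to picture "clear roles, clear boundaries, and verification loops," the sketch below gives an agent an explicit set of allowed actions, marks certain actions as human checkpoints, and logs every outcome so the system stays measurable. It is an assumption-laden illustration: `AgentRole`, `run_action`, the procurement agent, and the reviewer rule (which stands in for a real person) are all hypothetical and not tied to any particular agent framework.

```python
from collections import Counter
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class AgentRole:
    name: str
    allowed_actions: set[str]  # boundary: the only actions this agent may take
    review_points: set[str]    # checkpoints: actions a human must validate first


def run_action(
    role: AgentRole,
    action: str,
    payload: dict,
    human_approves: Callable[[str, dict], bool],
    log: list[tuple[str, str, str]],
) -> bool:
    """Execute only inside the role's boundary and after any required human check."""
    if action not in role.allowed_actions:
        log.append((role.name, action, "rejected"))  # outside the agent's role
        return False
    if action in role.review_points and not human_approves(action, payload):
        log.append((role.name, action, "held"))  # human declined at a checkpoint
        return False
    log.append((role.name, action, "executed"))
    return True


def reviewer(action: str, payload: dict) -> bool:
    # Hypothetical rule standing in for a human reviewer.
    return payload.get("amount", 0) < 10_000


# Hypothetical procurement agent, for illustration only.
procurement = AgentRole("procurement", {"draft_po", "issue_po"}, {"issue_po"})

log: list[tuple[str, str, str]] = []
run_action(procurement, "draft_po", {"amount": 5_000}, reviewer, log)
run_action(procurement, "issue_po", {"amount": 5_000}, reviewer, log)
run_action(procurement, "issue_po", {"amount": 50_000}, reviewer, log)
run_action(procurement, "pay_vendor", {"amount": 5_000}, reviewer, log)

# Measurability: count outcomes so bottlenecks and drift become visible.
print(Counter(status for _, _, status in log))
```

The design choice worth noting is that the boundary and the checkpoints live in the role definition, not inside the agent's reasoning. Changing where humans validate output becomes a process decision you can see and audit, rather than something buried in a prompt.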

KPMG's data validates something that should be obvious but apparently needs $200/hour consultants to make credible: the system design matters more than the components. The 11% who lead aren't leading because they have better agents. They're leading because they did the organizational work that everyone else skipped.

The question isn't whether your organization will deploy AI agents. Every organization will. The question is whether you'll redesign your processes to deserve them.