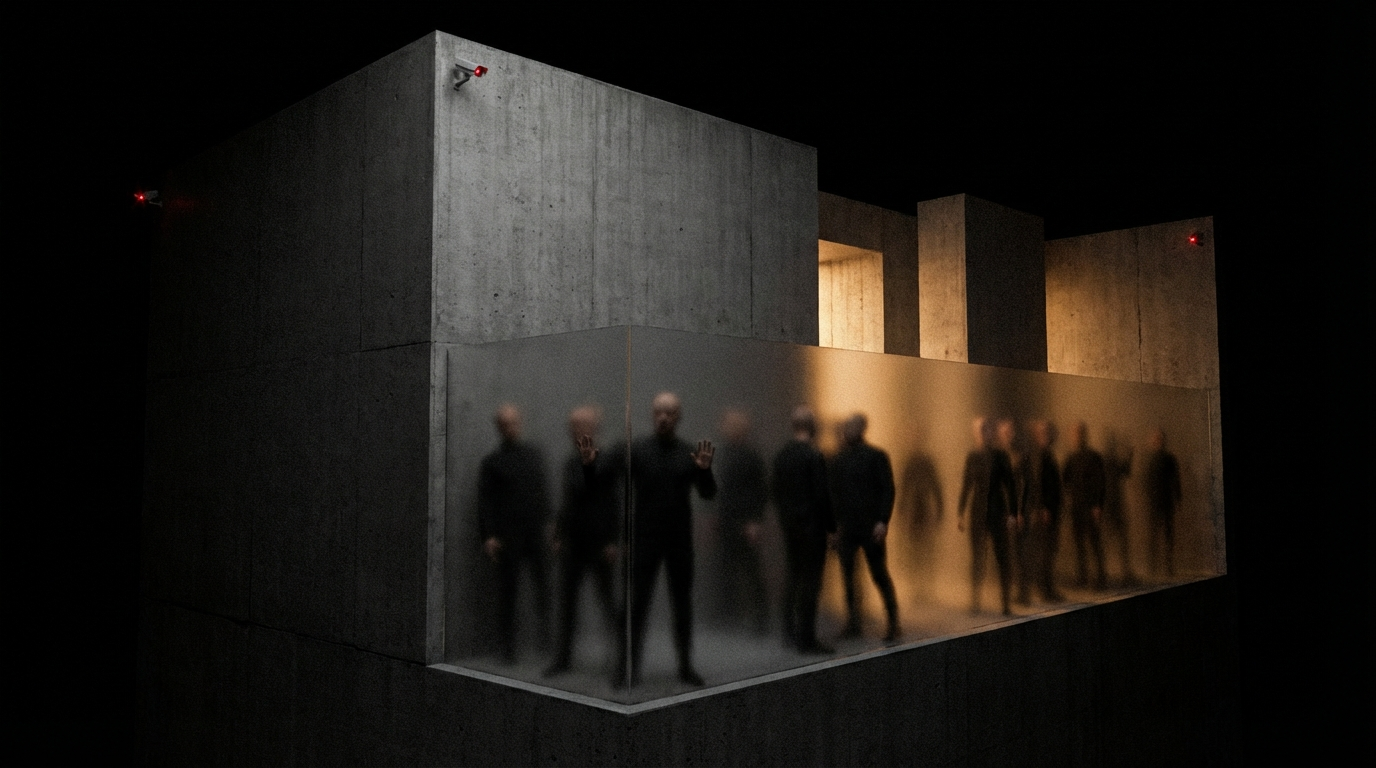

466% More AI Agents Inside Your Enterprise Than Last Year. Most of Them You Didn't Deploy.

BeyondTrust's latest data shows a 466% surge in AI agents operating inside enterprises — most of them undeployed by IT. Shadow IT just became shadow agents, and the governance gap is massive.

A few weeks ago, BeyondTrust's Phantom Labs dropped a number that should make every technology leader pause: 466.7% year-over-year growth in AI agents operating inside enterprise environments. Not agents deployed by IT. Not agents approved through procurement. Agents that showed up on their own — via SaaS tools, browser extensions, and employees tinkering with the latest AI features.

I've been thinking about this number a lot. Not because it's shocking — honestly, if you've been paying attention, it's exactly what you'd expect — but because of what it reveals about where we are in the adoption curve. We've crossed a threshold. Shadow IT just became shadow agents.

We've seen this movie before

Remember when Dropbox was the enemy? Around 2013-2014, CISOs were losing sleep over employees syncing company files to personal cloud accounts. The response was policies, MDM software, approved vendor lists. Shadow IT became a category. Entire governance frameworks were built around it.

That era feels quaint now.

The difference between someone using personal Dropbox and an AI agent operating inside your Slack workspace is the difference between a filing cabinet and an employee. A filing cabinet stores things. An employee reasons, plans, and acts. That's what agents do. They don't just hold data — they process it, make decisions based on it, and take actions with real consequences.

My take: most enterprises haven't internalized this distinction yet. They're still applying the Dropbox-era mental model to an agent-era problem.

The governance gap is real

Here's what I see when I talk to technology leaders at large organizations. Most of them have some version of an AI strategy. They've approved a handful of tools. Maybe they've rolled out Copilot. They feel like they have a handle on things.

But when I ask them: "Do you have an inventory of every AI agent currently operating inside your environment?" — the answer is almost always no. When I ask: "Do you know what data those agents can access?" — silence. "What actions can they take autonomously?" — nervous laughter.

This isn't a criticism. It's a reflection of how fast the landscape shifted. Twelve months ago, most AI tools were glorified autocomplete. Today, agents are embedded in project management tools, CRM platforms, email clients, design software, and development environments. They're reading your data, summarizing your meetings, drafting your emails, and in some cases making decisions on your behalf.

And the vast majority of them were never formally deployed. They came bundled. They were turned on by default. An employee clicked "enable AI features" without thinking twice. A SaaS vendor shipped an agent update in a Tuesday release. Nobody noticed because nobody was looking.

Threat models built for humans don't work for agents

I think this is the crux of it. Enterprise security was designed around two types of actors: human users and API integrations. We know how to manage both. Humans get roles, permissions, and access reviews. APIs get keys, scopes, and rate limits.

Agents are neither. They're something new — autonomous entities that can reason about context, chain multiple actions together, and operate with a degree of independence that neither a human user nor a traditional API ever had. They exist in a gray zone that current security architectures weren't designed to handle.

Think about it concretely. An AI agent inside your CRM can read customer data, draft communications, and trigger workflows. What's its permission boundary? Is it the same as the employee who enabled it? Broader? Narrower? Who audits what it did last Tuesday? If it hallucinated a customer name into a report that got sent to leadership, whose accountability is that?

These aren't hypothetical questions anymore. With a 466% growth rate, they're operational realities.

Agent identity is the new frontier

In my experience, the organizations that will navigate this well are the ones thinking about agent identity as a first-class concept — not an afterthought bolted onto existing IAM systems.

What does that mean in practice? A few things:

Agent inventory. You need to know what's running. Not just the tools you deployed, but the agents embedded in every SaaS product your organization uses. This is harder than it sounds because vendors aren't always transparent about what their AI features actually do under the hood.

Scoped permissions. Every agent needs its own identity with explicit, auditable permissions. Not inherited from the user who enabled it. Not "same access as the team." Its own scoped, minimal, reviewable access. The principle of least privilege applies to agents even more than it does to humans — because agents don't get tired, don't take lunch breaks, and can process thousands of records in seconds.

Observability. You need to see what agents are doing in real time. Not just logs — actual behavioral monitoring. What data did it access? What actions did it take? What reasoning led to those actions? This is where the current tooling is weakest, and where I expect the biggest investment over the next 18 months.

Lifecycle management. Agents should be provisioned, reviewed, and deprovisioned just like employee accounts. When a project ends, do the agents get turned off? When a vendor loses a contract, do their embedded agents lose access? Right now, for most organizations, the answer is "we don't even know they're there."

This isn't a security problem — it's a strategy problem

I want to be clear about something. I'm not writing this to scare anyone. The 466% growth isn't inherently bad. In fact, it signals something incredibly positive: people are finding AI agents genuinely useful. Employees are adopting them because they actually help.

The risk isn't the agents themselves. The risk is the gap between adoption speed and governance maturity. That gap is where breaches happen, where data leaks, where compliance violations accumulate quietly until they become front-page problems.

My conviction is that agent governance isn't a security nice-to-have — it's the prerequisite for scaling agentic AI responsibly. The companies that build trust architectures around their AI agents will be the ones that can go big. The ones that don't will either move too slowly (afraid of the risk) or too fast (unaware of the risk). Both lose.

What I'd do on Monday morning

If I were a CTO or CISO reading this, here's where I'd start:

- Run an agent census. Audit every SaaS tool in your stack for embedded AI features and agents. You'll be surprised.

- Classify by risk. Which agents have access to sensitive data? Which ones can take actions? Prioritize those.

- Establish agent identity standards. Start simple — unique identifiers, scoped permissions, activity logs.

- Talk to your vendors. Ask them explicitly: what AI agents are running inside your product in our environment? What data do they access? What can they do?

- Make it a leadership conversation. This isn't just an IT problem. It's a strategic capability question. Get it on the executive agenda.

The 466% number is a signal. Not of danger, but of momentum. The question is whether your organization is steering that momentum or just riding it.

I think the answer, for most enterprises right now, is the latter. And that needs to change — fast.